When I add a file to S3 for a user, I create a new folder named with a random 32- or 64-bit key inside that user’s initial folder, then add the file to the newly created folder.

I assign each user an initial folder name generated from a random 64-bit key. Even PUT, DELETE, GET, PULL would go through my own API before making changes to files on S3 and updating the DB. To keep track of a user’s files, I’d have to have a DB with all the locations matched to a user. Each file follows the same process of generating a new random folder: I have a service backed by a DB that takes a file to be placed into S3, creates a random 64-bit key, checks that it doesn’t clash, creates that folder within the bucket, and then adds the file to that folder.
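The process above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not a finished implementation: `FileIndex`, `register_user`, and `plan_upload` are hypothetical names, an in-memory dictionary stands in for the real database, and the actual upload to S3 is left out. Note that S3 has no real folders, so a "folder" here is just a prefix in the object key.

```python
import secrets


def random_key(bits: int = 64) -> str:
    """Return a random hex string carrying `bits` bits of entropy."""
    return secrets.token_hex(bits // 8)


class FileIndex:
    """Hypothetical in-memory stand-in for the DB that maps users to file locations."""

    def __init__(self) -> None:
        self.user_folder = {}    # user_id -> that user's initial folder name
        self.user_files = {}     # user_id -> list of object keys owned by the user
        self.used_keys = set()   # every folder key issued so far

    def _fresh_key(self) -> str:
        # Regenerate until the key does not clash with one already issued.
        while True:
            key = random_key()
            if key not in self.used_keys:
                self.used_keys.add(key)
                return key

    def register_user(self, user_id: str) -> str:
        """Assign the user's initial folder and return its name."""
        folder = self._fresh_key()
        self.user_folder[user_id] = folder
        self.user_files[user_id] = []
        return folder

    def plan_upload(self, user_id: str, filename: str) -> str:
        """Create a new random folder for one file and record its location."""
        object_key = f"{self.user_folder[user_id]}/{self._fresh_key()}/{filename}"
        self.user_files[user_id].append(object_key)
        return object_key


index = FileIndex()
index.register_user("alice")
print(index.plan_upload("alice", "report.pdf"))
```

The printed value has the shape `<user folder>/<file folder>/report.pdf`, and it is the key the API would pass to S3 when it stores the object.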

The more restrictive you can be about who sees which files, the better. I guess the most obvious requirement is that files are kept as secure as possible while still being accessible via the web.
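One way to enforce that restriction, assuming every request passes through your own API, is to check ownership before touching S3 at all. The sketch below is only an illustration: `authorize` is a hypothetical helper, and `locations` is a plain dictionary from object key to owner that stands in for the database.

```python
def authorize(locations: dict, user_id: str, object_key: str) -> None:
    """Raise PermissionError unless `object_key` is recorded as belonging to `user_id`."""
    owner = locations.get(object_key)
    # Unknown keys and other users' keys get the same error, so a caller
    # cannot use the response to probe which keys exist.
    if owner != user_id:
        raise PermissionError("file not found or access denied")


# Hypothetical records: object key -> owning user.
locations = {"a1b2c3d4e5f60718/0f1e2d3c4b5a6978/report.pdf": "alice"}

authorize(locations, "alice", "a1b2c3d4e5f60718/0f1e2d3c4b5a6978/report.pdf")  # passes silently
```

With a check like this in front of every operation, the bucket itself can stay private and only the API needs credentials for it.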

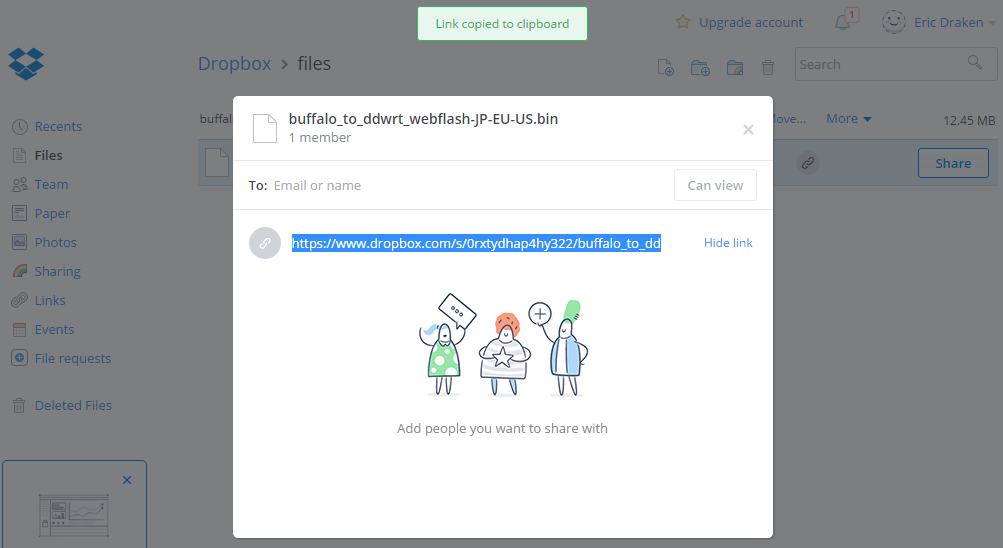

Let’s say I’m using AWS S3 for file storage. I already have an app, and I’m looking at implementing a file server that different users can access across multiple devices, much like Dropbox.
